How to Leverage Llama Models for Knowledge Management in 2026

Introduction to Llama Models for Knowledge Management

As organizations look for better ways to capture and reuse what they know, Llama models offer a practical foundation for knowledge management in 2026, improving how data is handled and how decisions are made.

In 2026, businesses are increasingly using Llama models for knowledge management. Developed by Meta AI, Llama (originally short for Large Language Model Meta AI) is a family of open-weight generative AI models: the weights can be downloaded, fine-tuned, and hosted on your own infrastructure. That flexibility, together with multilingual support in recent releases, makes them well suited to building AI-powered knowledge repositories, automating content creation, and improving data access for enterprises of all sizes.

By following this guide, you’ll learn how to use Llama models to streamline your organization’s knowledge management processes, improve team collaboration, and unlock valuable insights from your internal and external data.

Prerequisites for Implementing Llama Models

Before integrating Llama models into your knowledge management system, ensure you meet the following requirements:

- Technical Expertise: Basic to intermediate knowledge of machine learning concepts, Python, and frameworks like PyTorch will be helpful. For fine-tuning, familiarity with datasets and model training is recommended.

- Compute Resources: If deploying locally, you'll need GPU hardware with enough memory to hold the model weights, which grows with parameter count. Cloud infrastructure such as AWS, Google Cloud, or Azure can be used instead.

- Access to Training Data: Prepare relevant datasets, such as internal documentation, reports, or previously documented queries, for fine-tuning the model.

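- Licensing: Review Meta's license for the model version you plan to use. The Llama community license allows commercial use, but it includes an acceptable use policy, and companies with more than 700 million monthly active users must request a separate license from Meta. Verify the current licensing terms on Meta's website before deploying.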

- Development Tools: Set up a Python development environment, local or cloud-based hosting, a model hub such as Hugging Face for downloading and sharing models, and, where needed, an automation platform such as Zapier AI.
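Before installing anything larger, a quick check can confirm which supporting Python packages are already available. This is a minimal sketch using only the standard library; the package list (`torch`, `transformers`, `accelerate`, `peft`) is an assumption matching the other sketches in this guide, so adjust it to your own stack.

```python
import importlib.util
import platform

# Assumed stack for the sketches in this guide; edit to match your own tools.
REQUIRED_PACKAGES = ["torch", "transformers", "accelerate", "peft"]


def check_environment(packages=REQUIRED_PACKAGES):
    """Map each package name to True if it can be imported, without importing it."""
    return {name: importlib.util.find_spec(name) is not None for name in packages}


def gpu_available():
    """Return True if PyTorch is installed and can see a CUDA device."""
    if importlib.util.find_spec("torch") is None:
        return False
    import torch  # imported lazily so the check still runs without PyTorch

    return torch.cuda.is_available()


if __name__ == "__main__":
    print(f"python: {platform.python_version()}")
    for name, present in check_environment().items():
        print(f"{name}: {'ok' if present else 'missing'}")
    print(f"cuda: {gpu_available()}")
```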

Step-by-Step Guide: Leveraging Llama Models for Knowledge Management

Follow these steps to implement Llama models and build a robust knowledge management system.

Download Llama Models

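Request access to the model weights from Meta, either through the official Llama download page and GitHub repository or through the meta-llama organization on Hugging Face; both require accepting the license first. Choose the version that best fits your organization's compute capacity, since memory requirements grow with parameter count. Llama 2, for example, is available in 7B, 13B, and 70B parameter sizes, and later releases such as Llama 3.1 come in 8B, 70B, and 405B.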
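To match a model size to your hardware, a rough rule of thumb is parameters × bytes per parameter, plus some headroom. The sketch below assumes nominal Llama 2 sizes, an overhead factor of 1.2, and the gated `meta-llama/Llama-2-*-hf` repositories on Hugging Face. It estimates weight memory only (not the KV cache for long prompts or training state), and newer Llama families use different sizes and repository names.

```python
# Nominal Llama 2 sizes in billions of parameters (actual counts are slightly lower).
LLAMA2_SIZES_B = {"7b": 7, "13b": 13, "70b": 70}

# Approximate bytes per parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def estimate_weight_gb(size, precision="fp16", overhead=1.2):
    """Rough GB needed for the weights alone, padded by an assumed overhead factor."""
    return LLAMA2_SIZES_B[size] * BYTES_PER_PARAM[precision] * overhead


def largest_fitting_size(vram_gb, precision="fp16"):
    """Largest Llama 2 size whose estimate fits in vram_gb, or None if none fits."""
    fitting = [s for s in LLAMA2_SIZES_B if estimate_weight_gb(s, precision) <= vram_gb]
    return max(fitting, key=LLAMA2_SIZES_B.get) if fitting else None


def repo_id(size, chat=True):
    """Hugging Face repo id for a Llama 2 checkpoint (gated: accept the license first)."""
    suffix = "-chat-hf" if chat else "-hf"
    return f"meta-llama/Llama-2-{size}{suffix}"


def load_model(size="7b", chat=True):
    """Download (on first use) and load the tokenizer and model with transformers.

    Requires an accepted license, a Hugging Face login, and enough GPU memory.
    """
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model_id = repo_id(size, chat)
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.float16,
        device_map="auto",  # spreads layers across available devices; needs accelerate
    )
    return tokenizer, model
```

For example, `largest_fitting_size(24)` returns `"7b"` for a 24 GB GPU at fp16, while `largest_fitting_size(24, "int4")` returns `"13b"` if you are willing to run a 4-bit quantized build.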

Prepare Your Dataset

Curate a clean, structured dataset of your company's internal knowledge, such as past documents, FAQs, customer records, and marketing strategies. Format all data consistently, and remove personal or confidential information that should not be fed into the model.
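A minimal sketch of the formatting and scrubbing step, assuming question/answer pairs and a hypothetical `instruction`/`output` JSON Lines schema. The regular expressions only catch obvious emails and phone numbers (and may also flag dates or ID numbers), so treat them as a first pass before a dedicated PII tool and human review.

```python
import json
import re

# Crude patterns for two common kinds of sensitive data; not a complete PII filter.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Replace email addresses and phone-like number runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def to_training_record(question, answer):
    """Build one instruction-style example (hypothetical field names)."""
    return {"instruction": redact(question.strip()), "output": redact(answer.strip())}


def write_jsonl(pairs, path):
    """Write (question, answer) pairs as JSON Lines, one record per line."""
    with open(path, "w", encoding="utf-8") as f:
        for question, answer in pairs:
            record = to_training_record(question, answer)
            f.write(json.dumps(record, ensure_ascii=False) + "\n")
```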

Fine-Tune the Model

Using a machine learning framework like PyTorch or the Hugging Face libraries, fine-tune the pre-trained Llama model on your dataset. This adapts the model to the terminology and knowledge specific to your industry and organization.
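One common way to fine-tune on modest hardware is LoRA (low-rank adaptation), a parameter-efficient technique that trains small adapter matrices instead of all the weights; it is one option, not the only approach. The sketch below assumes the Hugging Face `peft` library; the rank, dropout, and target modules are illustrative choices, and the training loop itself (for example with the `transformers` `Trainer`) is omitted.

```python
def build_lora_config(rank=8):
    """LoRA adapter settings via peft (imported lazily); values are illustrative."""
    from peft import LoraConfig

    return LoraConfig(
        r=rank,
        lora_alpha=2 * rank,
        lora_dropout=0.05,
        target_modules=["q_proj", "v_proj"],  # attention projections in Llama checkpoints
        task_type="CAUSAL_LM",
    )


def lora_trainable_params(rank, hidden_size=4096, num_layers=32, modules_per_layer=2):
    """Count LoRA parameters when each adapted module is a hidden x hidden matrix.

    LoRA adds A (rank x d_in) and B (d_out x rank), i.e. rank * (d_in + d_out) per module.
    Defaults match Llama 2 7B (hidden size 4096, 32 layers) adapting q_proj and v_proj.
    """
    per_module = rank * (hidden_size + hidden_size)
    return per_module * modules_per_layer * num_layers


def attach_adapters(model, rank=8):
    """Wrap a loaded causal LM so that only the LoRA weights are trainable."""
    from peft import get_peft_model

    peft_model = get_peft_model(model, build_lora_config(rank))
    peft_model.print_trainable_parameters()
    return peft_model
```

`lora_trainable_params(8)` gives 4,194,304, roughly 0.06% of Llama 2 7B's parameters, which is why LoRA fine-tuning can fit on a single GPU.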

Set Up Infrastructure for Hosting

Decide between local (on-premises servers) and cloud-based deployment (e.g., Amazon SageMaker). Configure your hosting environment to handle the model's memory requirements, and expose the model through an API so it can later be integrated with knowledge management tools such as Notion or Confluence.
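If you host the model behind an OpenAI-compatible server (vLLM, for example, provides one), other tools can query it over plain HTTP. This is a minimal standard-library sketch; the endpoint URL, system prompt, and sampling settings are assumptions to replace with your own.

```python
import json
import urllib.request

# Hypothetical endpoint: an OpenAI-compatible server running on localhost.
ENDPOINT = "http://localhost:8000/v1/chat/completions"


def build_chat_request(question, model="meta-llama/Llama-2-7b-chat-hf",
                       max_tokens=256, endpoint=ENDPOINT):
    """Build (but do not send) a chat-completions request for the hosted model."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "Answer using the company knowledge base."},
            {"role": "user", "content": question},
        ],
        "max_tokens": max_tokens,
        "temperature": 0.2,
    }
    return urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question, timeout=60):
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(build_chat_request(question), timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```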

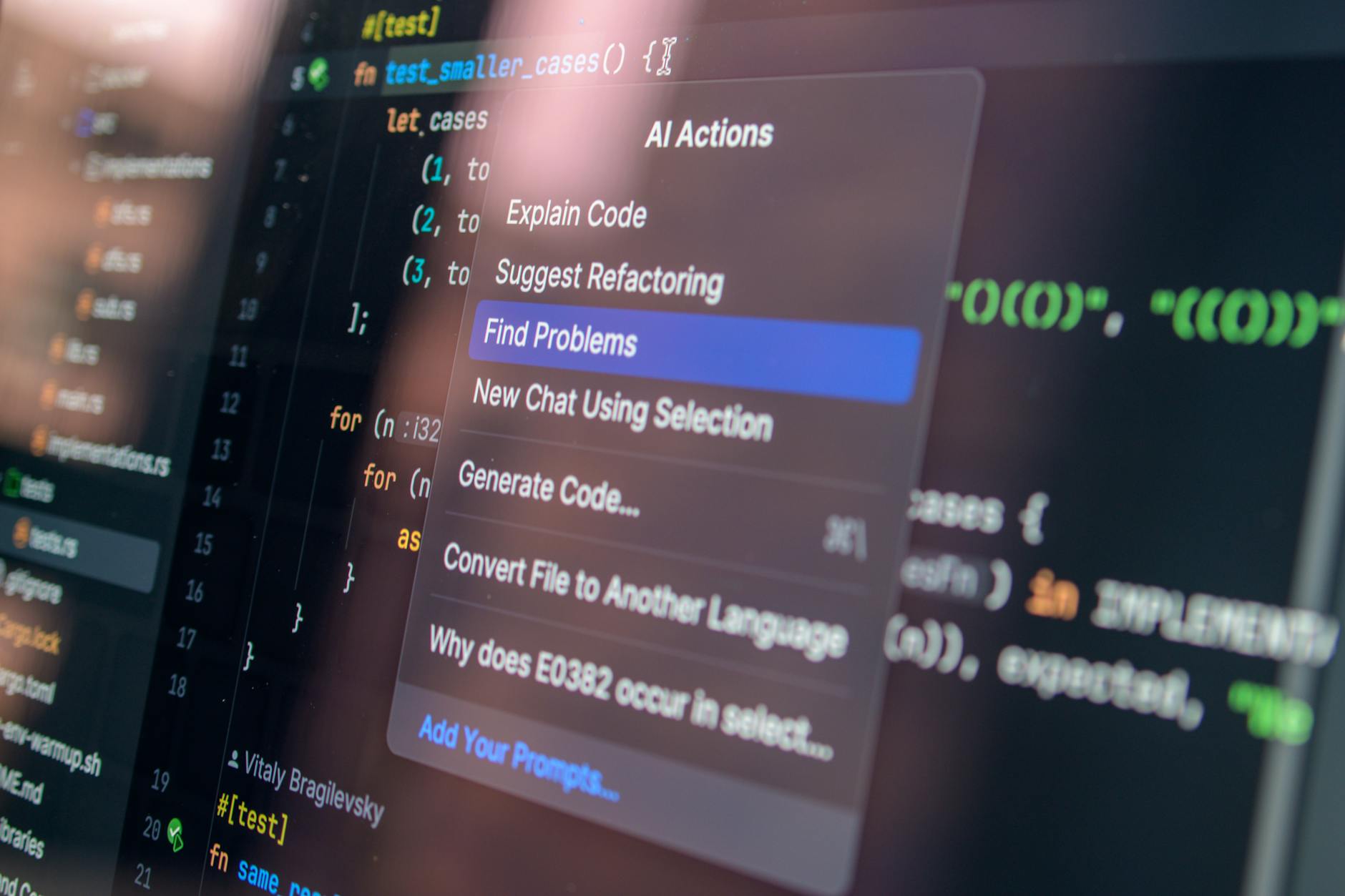

Integrate with Knowledge Management Tools

Once the model is deployed, connect it to your existing tools using platforms like Zapier AI or Make.com, or through custom APIs. For instance, integrate it with a customer service CRM to answer queries dynamically, or with a CMS to recommend content automatically.
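For knowledge management, a common integration pattern is retrieval-augmented generation: find the pages relevant to a question, then pass them to the model as context. The sketch below swaps in simple bag-of-words cosine similarity where a production system would typically use embedding search, and assumes your Notion or Confluence pages have already been exported into a hypothetical `{page_id: text}` dictionary.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase word tokens made of letters and digits."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the ids of the k pages most similar to the query."""
    q = Counter(tokenize(query))
    scored = sorted(
        documents.items(),
        key=lambda item: cosine(q, Counter(tokenize(item[1]))),
        reverse=True,
    )
    return [page_id for page_id, _ in scored[:k]]


def build_prompt(query, documents, k=2):
    """Ground the model's answer in the retrieved pages."""
    context = "\n\n".join(f"[{p}] {documents[p]}" for p in retrieve(query, documents, k))
    return (
        "Answer the question using only the context below and cite page ids.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )
```

The string returned by `build_prompt` can then be sent as the user message to the hosted model.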

Monitor and Test Results

Continuously monitor the model's performance with test cases and metrics such as knowledge search accuracy and the quality of answers to natural-language queries. Use the feedback to refine fine-tuning, and periodically update the model with new data.
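Knowledge search accuracy can be tracked with a fixed set of test questions, each paired with the page that should answer it. A minimal sketch, assuming hypothetical `(question, expected_page_id)` pairs and any retrieval function that takes `(question, k)` and returns page ids:

```python
def hit_rate_at_k(test_cases, retrieve_fn, k=3):
    """Fraction of questions whose expected page id appears in the top-k results.

    test_cases: list of (question, expected_page_id) pairs written by your team.
    retrieve_fn: any function (question, k) -> list of page ids.
    """
    if not test_cases:
        raise ValueError("need at least one test case")
    hits = sum(1 for q, expected in test_cases if expected in retrieve_fn(q, k))
    return hits / len(test_cases)


def failing_cases(test_cases, retrieve_fn, k=3):
    """Questions to review, or to cover in the next fine-tuning round."""
    return [q for q, expected in test_cases if expected not in retrieve_fn(q, k)]
```

Re-running the same test set after each dataset update shows whether a change actually improved retrieval.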

Common Issues & Fixes

Working with Llama models for knowledge management might introduce specific challenges. Here’s how you can address them:

- Issue: High infrastructure costs for large models like Llama 2 70B.

Fix: Opt for smaller, resource-efficient versions such as Llama 2 7B, which perform well on many tasks with much lower hardware demands.

- Issue: Inconsistent results during fine-tuning.

Fix: Pre-process your datasets to ensure they are clean, labeled, and structured explicitly for the knowledge management tasks you aim to optimize.

- Issue: Difficulty integrating Llama models with existing systems.

Fix: Use integration tools like Zapier AI or enlist the help of professional developers familiar with AI integration for enterprise systems.
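For the inconsistent-results issue above, a minimal cleaning pass might drop empty or very short answers and repeated questions before fine-tuning. The record schema and the 20-character threshold are assumptions for illustration.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def clean_records(records, min_output_chars=20):
    """Drop empty, too-short, and duplicate records, keeping the original order.

    records: iterable of dicts with "instruction" and "output" keys (hypothetical schema).
    """
    seen = set()
    kept = []
    for rec in records:
        instruction = rec.get("instruction", "").strip()
        output = rec.get("output", "").strip()
        if not instruction or len(output) < min_output_chars:
            continue  # nothing useful to learn from this record
        key = normalize(instruction)
        if key in seen:
            continue  # keep only the first answer to a repeated question
        seen.add(key)
        kept.append({"instruction": instruction, "output": output})
    return kept
```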

Pro Tips for Success

To get the most out of Llama models for knowledge management in 2026, keep these tips in mind:

- Regularly update and validate your training datasets to ensure consistency and relevancy.

- Use Hugging Face for ease of deployment and ongoing model hosting, especially if you lack in-house compute infrastructure.

- Combine Llama’s multilingual capabilities with global datasets to serve multinational teams or customer bases effectively.

- Measure ROI by comparing the time saved and the accessibility gains of Llama-integrated tools against those of traditional knowledge repositories.
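The ROI comparison can start as simple arithmetic. The figures below are hypothetical, and the default of 46 working weeks per year is an assumption:

```python
def annual_roi(hours_saved_per_user_week, users, hourly_cost, annual_running_cost,
               working_weeks=46):
    """Estimate the yearly value of time saved versus the cost of running the tool.

    Returns (annual_value, net_benefit, roi_ratio). Every input is your own estimate.
    """
    if annual_running_cost <= 0:
        raise ValueError("annual_running_cost must be positive")
    annual_value = hours_saved_per_user_week * users * working_weeks * hourly_cost
    net_benefit = annual_value - annual_running_cost
    return annual_value, net_benefit, net_benefit / annual_running_cost


# Hypothetical: 40 users each save 1.5 hours a week at a loaded cost of $50/hour,
# against $60,000 a year for hosting and maintenance.
value, net, roi = annual_roi(1.5, 40, 50, 60_000)
print(f"value ${value:,.0f}, net ${net:,.0f}, ROI {roi:.0%}")
# prints: value $138,000, net $78,000, ROI 130%
```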

Next Steps

After implementing and fine-tuning a Llama model for knowledge management, consider expanding its use across other functions in your organization. For instance, automate your market research processes, deploy AI-driven customer support chatbots, or use the models for real-time translation for international clients. Follow Meta’s updates on model advancements and stay informed on future releases to leverage the latest AI capabilities.

FAQ

What is Llama, and how does it help in knowledge management?

Llama is a series of open-weight large language models by Meta AI. It enables organizations to structure, search, and analyze internal knowledge more efficiently, making it a valuable component for knowledge management systems.

Can I use Llama models for free in my business?

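Usually, yes. Meta's Llama community license permits royalty-free commercial use, subject to an acceptable use policy; companies whose products exceeded 700 million monthly active users must request a separate license from Meta. Terms differ between model versions, so verify the current license on Meta's website.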

What kind of infrastructure is necessary for deploying Llama models?

You’ll need GPU-supported local servers for larger models or a reliable cloud hosting service like AWS to deploy Llama models effectively. Choose based on your organization’s scale and budget.

How do I prepare datasets for fine-tuning Llama models?

Ensure the datasets are clean, formatted consistently, and focused on the knowledge areas you aim to enhance. Use tools like PyTorch or Hugging Face for fine-tuning.

Which platforms support Llama model integrations for knowledge management?

Hugging Face and Amazon SageMaker can host Llama models, while tools such as Notion and Confluence can be connected to a hosted model through their APIs or through automation platforms like Zapier and Make.com to support knowledge sharing and retrieval.

Last tested: April 20, 2026

For any commercial licensing inquiries, visit Meta’s official research page.
